How We Built BrandForge with Google AI Models and Cloud Services

At BroadComms, our work sits at the intersection of enterprise AI architecture and real-world product development. We design and deploy agentic AI systems built on multi-provider foundations, and occasionally we build something that puts every principle we preach directly into practice. BrandForge is one of those.

BrandForge is our multimodal marketing campaign generation platform, an AI Creative Director that takes a single campaign brief and brand kit, then orchestrates multiple specialized AI agents to generate a complete, publish-ready campaign across 14 channels in minutes. Images. Videos. Audio ads. Platform-specific copy. All streamed live onto a mosaic canvas as each asset materializes.

We built BrandForge as our submission to the Google Gemini Live Agent Challenge, in the Creative Storyteller category. This post walks through the architecture, the engineering decisions, the technical lessons we learned the hard way, and what this project revealed about the state of multimodal AI orchestration in 2026.

The Problem We Set Out to Solve

Modern marketing is no longer a single-channel activity. For every product launch, announcement, or campaign, marketers, founders, startups, and small teams are expected to produce a continuous stream of platform-specific content across LinkedIn, Instagram, Twitter/X, Facebook, TikTok, YouTube, email, blogs, Reddit, Pinterest, Threads, Spotify, and more.

Each platform demands its own format, tone, visual dimensions, and storytelling style. A single campaign can explode into dozens of content assets (captions, images, thumbnails, short-form videos, reels, blog articles, newsletters, and audio ads), all requiring repurposing and optimization for different platforms and audience behaviours.

Even with a clear brief and brand kit, coordinating all this forces teams into days of fragmented workflows: jumping between tools, reviewing multiple drafts, and repeatedly adapting the same content for each channel. For small teams and solo entrepreneurs operating without agency budgets, this process is exhausting, expensive, and nearly impossible to scale.

Most existing AI content tools only compound the fragmentation: they generate a single asset for a single platform at a time, with no strategic cohesion across the broader campaign. The creative context gets lost in the repetition.

The opportunity we saw: what if a single AI creative director could hold the entire strategic context for a campaign and orchestrate every channel simultaneously from a single brief?

What Does It Do?

You give BrandForge a campaign brief (for example, "Summer sale campaign for EcoHydrate Pro, our eco-friendly water bottle, targeting health-conscious millennials who live an active lifestyle") along with a brand kit containing your logo, product images, colors, fonts, and tone of voice. In return, you get a complete marketing campaign across 14 channels, with every asset generated, streamed live, and ready to publish.

At the center of the experience is a voice-controlled Live Agent powered by the Gemini Live API. It acts as your AI Creative Director. You can speak naturally to create brands, launch campaigns, iterate on specific channels, reframe the entire campaign, or navigate the platform entirely hands-free. It's not a chat interface: it listens, responds, narrates what it's doing, and even sees your screen.

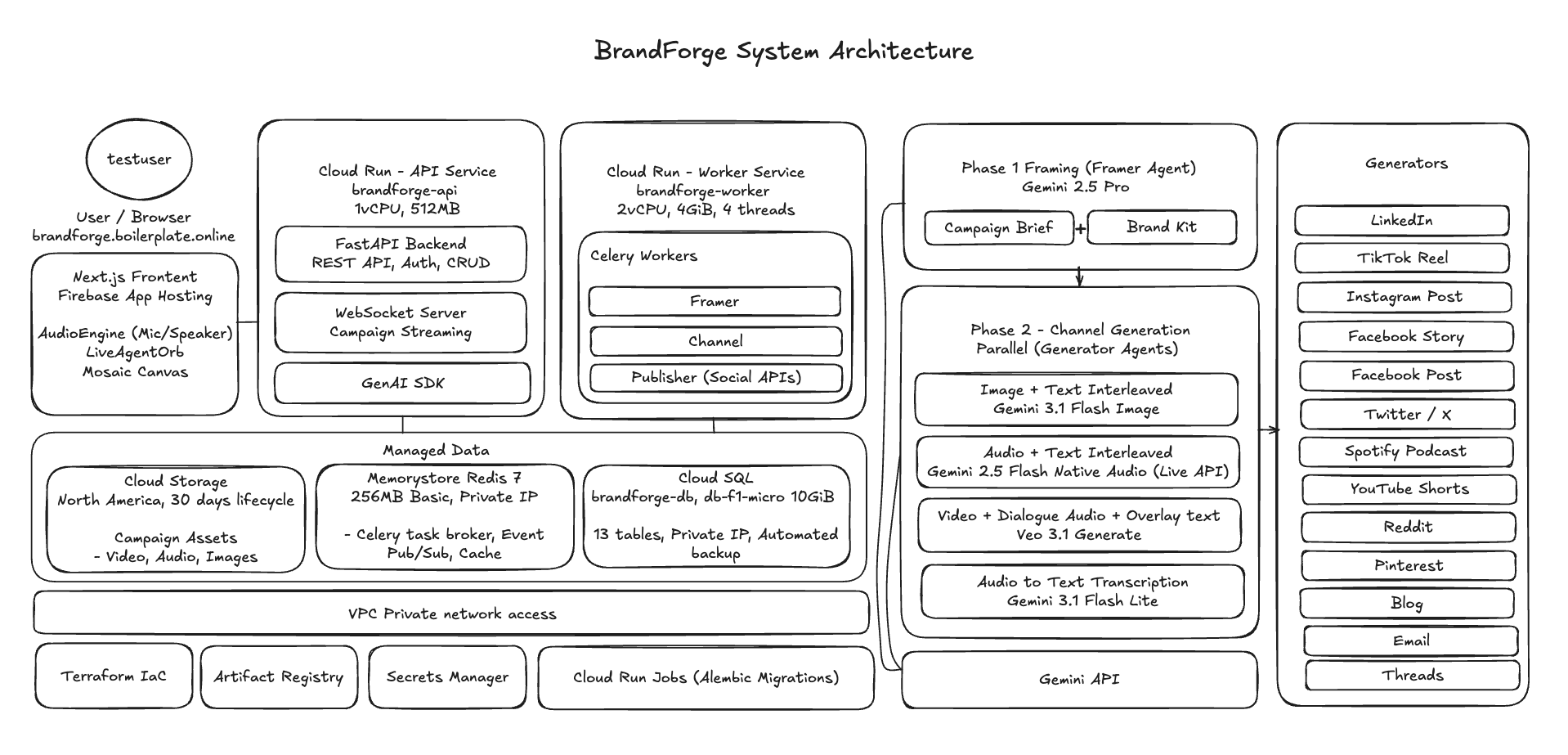

The Architecture: Two-Stage Multimodal Pipeline

The core architectural insight behind BrandForge is a clean separation between strategic planning and parallel execution. We call it the Framer/Generator pipeline.

Stage 1: The Framer Agent

The Framer is the strategic brain. It analyzes the campaign brief alongside the brand kit and produces a structured creative plan in JSON: a comprehensive document containing the campaign headline, tagline, campaign story, creative direction, channel-specific copy for all 14 channels, call-to-action variations, a voiceover script, and a video prompt with scene breakdowns. Stage 1 also produces the campaign's hero image.

We use gemini-2.5-pro for the Framer because deep reasoning matters here: the Framer needs to think in terms of campaign strategy, brand voice, and platform nuance, not just generate text. The hero image is generated in parallel using gemini-3-pro-image-preview, establishing the visual identity that anchors every subsequent channel asset.
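Because all 14 generators consume the same JSON plan, it pays to validate that plan once, before fanning out. The sketch below is a minimal illustration, not BrandForge's schema: the field names and channel keys are hypothetical, modeled on the contents listed above. (How the JSON is requested is not stated in the post; setting response_mime_type="application/json" in the Gemini generation config is one common option.)

```python
from __future__ import annotations

import json
from dataclasses import dataclass, fields

# Hypothetical channel keys: the post names the 14 channels but not their identifiers.
CHANNELS = (
    "linkedin", "twitter", "facebook_post", "email", "blog", "reddit", "pinterest",
    "threads", "instagram_post", "youtube", "tiktok", "instagram_reels",
    "facebook_stories", "spotify",
)


@dataclass(frozen=True)
class CreativePlan:
    """Hypothetical shape of the Framer's structured output."""

    headline: str
    tagline: str
    campaign_story: str
    creative_direction: str
    channel_copy: dict[str, str]
    cta_variations: list[str]
    voiceover_script: str
    video_prompt: str


def parse_plan(raw: str) -> CreativePlan:
    """Parse the Framer's JSON and fail fast before any generator runs."""
    data = json.loads(raw)
    missing_fields = [f.name for f in fields(CreativePlan) if f.name not in data]
    if missing_fields:
        raise ValueError(f"Framer plan is missing fields: {', '.join(missing_fields)}")
    # Extra keys in the model output are ignored rather than rejected.
    plan = CreativePlan(**{f.name: data[f.name] for f in fields(CreativePlan)})
    missing_copy = [ch for ch in CHANNELS if not plan.channel_copy.get(ch)]
    if missing_copy:
        raise ValueError(f"Framer plan has no copy for: {', '.join(missing_copy)}")
    return plan
```

Rejecting an incomplete plan up front is cheaper than discovering, after 13 channels have rendered, that the fourteenth has nothing to work from.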

Stage 2: 14 Parallel Channel Generators

Once the Framer completes, we dispatch all 14 channel generators simultaneously via Celery task groups. Each generator is a Python class that knows its platform inside out, inheriting from a BaseChannelGenerator and registered via a clean decorator pattern. Adding a new channel means writing one class and decorating it. No configuration files, no factory updates, no manual wiring.
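The registration pattern described above can be sketched in a few lines. BaseChannelGenerator comes from the post and register_channel from its roadmap section; the registry dict, the generate signature, and the two example channels are assumptions. A ThreadPoolExecutor stands in for the Celery task group so the sketch runs without a broker; in Celery, the same fan-out would be a group of task signatures.

```python
from __future__ import annotations

from abc import ABC, abstractmethod
from concurrent.futures import ThreadPoolExecutor


class BaseChannelGenerator(ABC):
    """Every channel generator works from the same Framer plan."""

    channel: str = ""  # set by @register_channel

    @abstractmethod
    def generate(self, plan: dict) -> dict:
        """Produce this channel's asset from the shared plan."""


# Channel key -> generator class, filled in at import time by the decorator.
CHANNEL_REGISTRY: dict[str, type[BaseChannelGenerator]] = {}


def register_channel(name: str):
    """Class decorator: adding a channel means writing one class and decorating it."""

    def decorator(cls: type[BaseChannelGenerator]) -> type[BaseChannelGenerator]:
        if name in CHANNEL_REGISTRY:
            raise ValueError(f"channel {name!r} is already registered")
        cls.channel = name
        CHANNEL_REGISTRY[name] = cls
        return cls

    return decorator


@register_channel("linkedin")
class LinkedInGenerator(BaseChannelGenerator):
    def generate(self, plan: dict) -> dict:
        # A real generator would also call an image model; this returns the copy only.
        return {"channel": self.channel, "text": plan["channel_copy"][self.channel]}


@register_channel("twitter")
class TwitterGenerator(BaseChannelGenerator):
    def generate(self, plan: dict) -> dict:
        # Trim to the platform's 280-character post limit.
        return {"channel": self.channel, "text": plan["channel_copy"][self.channel][:280]}


def generate_all(plan: dict) -> dict[str, dict]:
    """Run every registered generator concurrently against the same plan."""
    with ThreadPoolExecutor(max_workers=max(1, len(CHANNEL_REGISTRY))) as pool:
        futures = {name: pool.submit(cls().generate, plan) for name, cls in CHANNEL_REGISTRY.items()}
        return {name: future.result() for name, future in futures.items()}
```

Because the decorator runs at import time, simply importing a module that defines a new generator is enough to include it in the next fan-out.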

The 14 generators break down across three modalities:

- 9 Image + Text channels (LinkedIn, Twitter/X, Facebook Post, Email, Blog, Reddit, Pinterest, Threads, Instagram Post), all using gemini-3.1-flash-image-preview for fast, brand-consistent image generation with text overlays.

- 4 Video channels (YouTube, TikTok, Instagram Reels, Facebook Stories), using veo-3.1-fast-generate-preview for short-form video with dialogue audio and overlay text.

- 1 Audio channel (Spotify), using gemini-2.5-flash-native-audio-preview-12-2025 for voice synthesis to create a spoken audio ad, with gemini-3.1-flash-image-preview handling the cover art and gemini-3.1-flash-lite-preview handling automatic transcription.

The Seven Google AI Models Behind BrandForge

This is what makes BrandForge a genuine multimodal orchestration system. We don't call one model and hope it handles everything. We coordinate seven distinct models, each selected for its specific capability profile:

| Model | Role in BrandForge |
|---|---|
| gemini-2.5-pro | Framer Agent: deep strategic campaign planning with structured JSON output |
| gemini-3-pro-image-preview (hero) | Hero image generation, the visual centrepiece of the campaign |
| gemini-3.1-flash-image-preview (channels) | 9 image channel generators + Spotify cover art |
| veo-3.1-fast-generate-preview | 4 video channel generators (YouTube, TikTok, Reels, Stories) |
| gemini-2.5-flash-native-audio-preview-12-2025 | Spotify audio ad voice synthesis |
| gemini-3.1-flash-lite-preview | Spotify audio transcription |
| gemini-2.5-flash-native-audio-preview-latest | Live Agent: voice-controlled Creative Director (Live API) |

Every model is accessed through the Google GenAI SDK (google-genai), which provides a unified genai.Client interface. Same client, same interface, different model string, different modality: text, images, video, audio, and voice, the full multimodal stack.

This is the multi-provider architecture principle applied within a single provider's ecosystem: match the model to the task, and let the orchestration layer handle the coordination.
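A routing table keeps the "match the model to the task" decision in one place. The model strings below are copied from the table above; the role keys and the ModelRoute type are hypothetical. The sketch stays dependency-free, so the actual google-genai calls appear only as comments.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRoute:
    model: str
    modality: str  # what this model produces for BrandForge


# Model strings from the post's model table; the role keys are illustrative.
MODEL_ROUTES = {
    "framer": ModelRoute("gemini-2.5-pro", "json"),
    "hero_image": ModelRoute("gemini-3-pro-image-preview", "image"),
    "channel_image": ModelRoute("gemini-3.1-flash-image-preview", "image"),
    "channel_video": ModelRoute("veo-3.1-fast-generate-preview", "video"),
    "spotify_voice": ModelRoute("gemini-2.5-flash-native-audio-preview-12-2025", "audio"),
    "spotify_transcript": ModelRoute("gemini-3.1-flash-lite-preview", "text"),
    "live_agent": ModelRoute("gemini-2.5-flash-native-audio-preview-latest", "audio"),
}


def route(role: str) -> ModelRoute:
    """Look up the model for a task; unknown roles fail loudly instead of silently defaulting."""
    try:
        return MODEL_ROUTES[role]
    except KeyError:
        raise ValueError(f"no model configured for role {role!r}") from None


# With the SDK, one client serves every route (not executed here), e.g.:
#   from google import genai
#   client = genai.Client()
#   client.models.generate_content(model=route("framer").model, contents=brief)
# Veo routes go through client.models.generate_videos, and the Live Agent
# through client.aio.live.connect, on that same client.
```

Failing on an unknown role, rather than falling back to a default model, keeps a typo from quietly sending video work to a text model.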

Technical Lessons We Learned

Building a system like this in a compressed hackathon timeline means discovering edge cases fast. Two techniques we developed proved especially consequential.

1. Hex-to-Color-Name Sanitization for Veo

Video generation with Veo taught us an important lesson: when you include hex color codes like #2E8B57 in a video prompt, Veo interprets them as literal text and renders the string visibly in the video. Your brand's "sea green" primary color becomes the string #2E8B57 floating on screen.

We built a sanitization layer that converts hex codes to human-readable color names before they reach Veo, translating #2E8B57 to "sea green" so the model treats it as a color instruction rather than a text element to render.
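A minimal version of such a layer is sketched below. The post does not say how BrandForge chooses names, so this assumes a nearest-match lookup in RGB space over a small, hand-picked subset of CSS named colors (the palette is hypothetical and deliberately short). #2E8B57 is exactly CSS "seagreen", so the post's example maps cleanly.

```python
from __future__ import annotations

import re

# Illustrative subset of CSS named colors; a production table would be larger.
NAMED_COLORS = {
    "sea green": (0x2E, 0x8B, 0x57),
    "forest green": (0x22, 0x8B, 0x22),
    "navy": (0x00, 0x00, 0x80),
    "royal blue": (0x41, 0x69, 0xE1),
    "sky blue": (0x87, 0xCE, 0xEB),
    "crimson": (0xDC, 0x14, 0x3C),
    "orange": (0xFF, 0xA5, 0x00),
    "gold": (0xFF, 0xD7, 0x00),
    "saddle brown": (0x8B, 0x45, 0x13),
    "white": (0xFF, 0xFF, 0xFF),
    "gray": (0x80, 0x80, 0x80),
    "black": (0x00, 0x00, 0x00),
}

# #RRGGBB or #RGB, ending at a word boundary so "#2E8B57ab" is left alone.
HEX_PATTERN = re.compile(r"#(?:[0-9a-fA-F]{6}|[0-9a-fA-F]{3})\b")


def hex_to_rgb(code: str) -> tuple[int, int, int]:
    digits = code.lstrip("#")
    if len(digits) == 3:  # expand shorthand: "fff" -> "ffffff"
        digits = "".join(ch * 2 for ch in digits)
    return (int(digits[0:2], 16), int(digits[2:4], 16), int(digits[4:6], 16))


def nearest_color_name(code: str) -> str:
    rgb = hex_to_rgb(code)
    # Squared Euclidean distance in RGB: crude, but dependency-free.
    return min(
        NAMED_COLORS,
        key=lambda name: sum((a - b) ** 2 for a, b in zip(rgb, NAMED_COLORS[name])),
    )


def sanitize_for_veo(prompt: str) -> str:
    """Replace every hex code with a readable color name before prompting Veo."""
    return HEX_PATTERN.sub(lambda m: nearest_color_name(m.group(0)), prompt)
```

For example, sanitize_for_veo("Primary color #2E8B57 on a #fff background") yields "Primary color sea green on a white background". A perceptual color space such as CIELAB would choose closer names than raw RGB distance, at the cost of more code.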

2. Multipart Brand Prompting

The second lesson is less about a bug fix and more about a fundamental shift in prompting strategy. Instead of describing a brand's logo in text (for example, "a green water droplet with the text EcoHydrate in sans-serif"), we send the actual logo image as an inline part of the multipart prompt alongside the text instructions.

Gemini's multimodal understanding sees the logo, reads its style, and incorporates it naturally into every generated asset. The difference in brand consistency between text-described logos and image-provided logos is dramatic. This is why BrandForge requires a brand kit upload rather than a text description. The quality delta is not marginal; it is the whole point.
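The idea is easiest to see as an ordered list of prompt parts. The sketch below is provider-neutral and dependency-free: TextPart, ImagePart, and build_brand_prompt are hypothetical names, and the ordering (instructions first, then each brand image preceded by a short label) is an assumption rather than BrandForge's exact layout. With the google-genai SDK, each image part would typically become types.Part.from_bytes(data=..., mime_type=...) inside the contents list.

```python
from __future__ import annotations

import mimetypes
from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class TextPart:
    text: str


@dataclass(frozen=True)
class ImagePart:
    data: bytes
    mime_type: str


Part = Union[TextPart, ImagePart]


def build_brand_prompt(instructions: str, brand_images: dict[str, tuple[str, bytes]]) -> list[Part]:
    """Send brand assets as images, each preceded by a label saying what it is.

    brand_images maps a role ("logo", "product") to (filename, raw bytes).
    """
    parts: list[Part] = [TextPart(instructions)]
    for role, (filename, data) in brand_images.items():
        mime_type, _ = mimetypes.guess_type(filename)
        if mime_type is None or not mime_type.startswith("image/"):
            raise ValueError(f"{filename!r} does not look like an image")
        parts.append(TextPart(f"Brand {role} (reproduce faithfully):"))
        parts.append(ImagePart(data, mime_type))
    return parts
```

Labeling each image tells the model which asset is the logo and which is the product, instead of leaving it to infer roles from pixels alone.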

The Voice-Controlled Live Agent

The generation pipeline is compelling on its own, but the Live Agent is where BrandForge becomes a genuinely different product category.

We built a voice-first Creative Director using the Gemini Live API with gemini-2.5-flash-native-audio-preview. It speaks with the Kore voice, listens in real time, processes audio natively without speech-to-text intermediaries, and exposes 26 function declarations across three domains:

- Brand Kit Management (5 functions): create, read, update, delete, and list brand kits

- Campaign Lifecycle (12 functions): create, generate, iterate, reframe, reset, regenerate channels, check status, publish

- UI Navigation (9 functions): navigate pages, open channel details, capture screenshots, click elements, fill inputs

You can say: "Create a new brand kit for Bean & Brew coffee shop with earth tones and a warm, conversational tone." The agent asks one question at a time (primary color, secondary color, fonts) and builds the brand kit through natural conversation.

You can say: "Generate a summer campaign targeting young professionals." The agent dispatches the Framer, narrates the progress as channels complete, and invites you to review the mosaic canvas as it fills.

You can say: "Make the LinkedIn post more executive and less casual." The agent calls the iterate campaign function on that specific channel and regenerates only what you asked to change.
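Each of these voice commands ultimately reaches the backend as a function call: a name plus arguments. The sketch below shows one declaration in the OpenAPI-style schema shape that Gemini function calling accepts, together with a small dispatcher that checks required arguments before running a handler. The name iterate_campaign, its parameters, and the handler's return value are hypothetical; the post names the capability but not its signature.

```python
from __future__ import annotations

from typing import Any, Callable

# Hypothetical declaration for the "iterate campaign" capability.
ITERATE_CAMPAIGN = {
    "name": "iterate_campaign",
    "description": "Regenerate one channel of the current campaign with a change request.",
    "parameters": {
        "type": "object",
        "properties": {
            "channel": {"type": "string", "description": "Channel key, e.g. linkedin"},
            "instruction": {"type": "string", "description": "What to change"},
        },
        "required": ["channel", "instruction"],
    },
}

HANDLERS: dict[str, tuple[dict, Callable[..., dict]]] = {}


def tool(declaration: dict):
    """Register a handler under its declaration's name so the two cannot drift apart."""

    def decorator(fn: Callable[..., dict]) -> Callable[..., dict]:
        HANDLERS[declaration["name"]] = (declaration, fn)
        return fn

    return decorator


@tool(ITERATE_CAMPAIGN)
def iterate_campaign(channel: str, instruction: str) -> dict:
    # A real handler would enqueue regeneration for this one channel only.
    return {"status": "queued", "channel": channel, "instruction": instruction}


def dispatch(name: str, args: dict[str, Any]) -> dict:
    """Run a model-issued call; problems are returned to the model, not raised."""
    if name not in HANDLERS:
        return {"error": f"unknown function {name}"}
    declaration, fn = HANDLERS[name]
    missing = [p for p in declaration["parameters"]["required"] if p not in args]
    if missing:
        return {"error": f"missing arguments: {', '.join(missing)}"}
    known = declaration["parameters"]["properties"]
    return fn(**{k: v for k, v in args.items() if k in known})
```

Returning errors as data lets the agent recover conversationally ("Which channel should I change?") instead of the session failing on a malformed call.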

The system instruction that shapes this persona runs to over 2,000 lines, defining conversational flow rules, multi-step workflow protocols, when to ask a follow-up question versus when to proceed, how to handle ambiguous commands, and how to maintain the Creative Director personality across a long session. Voice interaction is fundamentally different from text chat: users speak in fragments, interrupt themselves, and expect the agent to infer context from what it heard two exchanges ago.

Session Resumption for Long Creative Sessions

The Gemini Live API has an approximately 15-minute session timeout. A Creative Director session that spans the full campaign workflow (brand creation, campaign generation, channel iteration, and publishing preparation) can easily run for 30 to 45 minutes.

Our solution combines three mechanisms:

- Redis-backed session handles that store reconnection state

- Proactive reconnection at 9.5 minutes, before the timeout fires

- Context window compression (200K to 100K tokens) to maintain conversation history across long sessions
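The three mechanisms above can be sketched without any network code. An in-memory store with expiry stands in for Redis (with redis-py, set(name, value, ex=seconds) gives the same expiry behavior), and a simple clock check decides when to reconnect. The 9.5-minute threshold and the 200K/100K token figures come from the post; the class names, the one-hour TTL, and the dict shape of the compression settings are assumptions. In the google-genai SDK, these settings roughly correspond to SessionResumptionConfig and ContextWindowCompressionConfig on the Live connection config.

```python
from __future__ import annotations

import time
from typing import Callable, Optional

RECONNECT_AFTER_S = 9.5 * 60  # from the post: reconnect proactively at 9.5 minutes

# Mirrors the post's 200K -> 100K token compression; key names are illustrative.
COMPRESSION = {"trigger_tokens": 200_000, "target_tokens": 100_000}


class HandleStore:
    """In-memory stand-in for Redis: the latest resumption handle per user, with expiry."""

    def __init__(self, ttl_s: float = 3600.0, clock: Callable[[], float] = time.monotonic):
        self._ttl_s = ttl_s
        self._clock = clock
        self._data: dict[str, tuple[str, float]] = {}

    def save(self, user_id: str, handle: str) -> None:
        # Overwrite on every update so only the newest handle is ever resumed.
        self._data[user_id] = (handle, self._clock() + self._ttl_s)

    def load(self, user_id: str) -> Optional[str]:
        entry = self._data.get(user_id)
        if entry is None or entry[1] <= self._clock():
            self._data.pop(user_id, None)  # drop expired handles
            return None
        return entry[0]


def should_reconnect(connected_at: float, now: float) -> bool:
    """True once the current connection has lived long enough to be replaced."""
    return now - connected_at >= RECONNECT_AFTER_S
```

In the Live API, the server periodically sends resumption updates carrying a fresh handle; saving each one means a proactive reconnect can pass the newest handle back and continue the same conversation.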

Audio flows as raw PCM over a mixed binary and JSON WebSocket protocol: 16kHz mono in from the browser and 24kHz mono out from the agent. Binary frames carry audio. JSON frames carry page context, transcripts, and UI commands like navigation directives. The frontend renders a breathing orb animation synchronized with the agent's voice activity.
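Routing a mixed binary/JSON socket comes down to checking the frame type first. The sketch below is a framework-neutral helper, not BrandForge's handler: it assumes 16-bit samples (the post gives sample rates and channel count but not bit depth) and hypothetical JSON message shapes with "type" and "payload" keys. The duration helper is handy for pacing audio: at 16 kHz, 16-bit mono, one second is 32,000 bytes.

```python
from __future__ import annotations

import json
from dataclasses import dataclass
from typing import Union

BYTES_PER_SAMPLE = 2  # assumption: 16-bit PCM
INPUT_RATE_HZ = 16_000  # browser -> agent
OUTPUT_RATE_HZ = 24_000  # agent -> browser


@dataclass(frozen=True)
class AudioChunk:
    pcm: bytes
    sample_rate: int

    @property
    def seconds(self) -> float:
        # Mono, so bytes / (bytes per sample * samples per second).
        return len(self.pcm) / (BYTES_PER_SAMPLE * self.sample_rate)


@dataclass(frozen=True)
class ControlMessage:
    type: str
    payload: dict


def parse_inbound(frame: Union[bytes, str]) -> Union[AudioChunk, ControlMessage]:
    """Binary frames are microphone audio; text frames are JSON control messages."""
    if isinstance(frame, (bytes, bytearray)):
        if len(frame) % BYTES_PER_SAMPLE:
            raise ValueError("PCM frame is not a whole number of samples")
        return AudioChunk(bytes(frame), INPUT_RATE_HZ)
    message = json.loads(frame)
    return ControlMessage(message["type"], message.get("payload", {}))
```

Keeping audio in binary frames avoids the roughly one-third size overhead of base64-encoding PCM inside JSON.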

Google Cloud Infrastructure

BrandForge runs entirely on Google Cloud, provisioned with Terraform Infrastructure-as-Code (IaC):

- Cloud Run: API service (FastAPI, 1 vCPU, 512Mi, 0–3 autoscaled instances) and Worker service (Celery, 2 vCPU, 4Gi, threads pool, always-on)

- Cloud SQL: PostgreSQL 16 on a private IP, with 11 tables using UUID primary keys and JSONB columns for brand data and Framer output

- Memorystore: Redis 7, serving as the Celery task broker, real-time pub/sub event bus, session state store, and rate limiter

- Cloud Storage: all generated assets (images, videos, audio) with 30-day lifecycle policies

- Secret Manager: API keys, database credentials, JWT secrets

- Artifact Registry: Docker image storage

- Firebase: Next.js frontend app hosting

The backend is async Python throughout: FastAPI with SQLAlchemy 2.0's async engine on asyncpg, Celery for distributed task processing across three dedicated queues (framer, channels, publisher) plus Celery's default celery queue, and Redis pub/sub for real-time event streaming.
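The queue layout maps naturally onto Celery's task_routes setting. The sketch below is written as a plain settings module (Celery can load one with config_from_object), so it runs without Celery installed. The task names and the REDIS_HOST placeholder are hypothetical, and queue_for is an illustrative helper that mimics the glob matching Celery applies to task_routes keys; it is not part of Celery.

```python
from fnmatch import fnmatchcase

broker_url = "redis://REDIS_HOST:6379/0"  # placeholder for the Memorystore address
task_default_queue = "celery"  # Celery's default queue name
worker_pool = "threads"  # the post's worker uses a threads pool

# Hypothetical task names; one dedicated queue per pipeline stage.
task_routes = {
    "brandforge.framer.*": {"queue": "framer"},
    "brandforge.channels.*": {"queue": "channels"},
    "brandforge.publisher.*": {"queue": "publisher"},
}


def queue_for(task_name: str) -> str:
    """Illustrative resolver: the first matching glob wins, else the default queue."""
    for pattern, options in task_routes.items():
        if fnmatchcase(task_name, pattern):
            return options["queue"]
    return task_default_queue
```

Separate queues let slow video jobs on the channels queue run alongside the Framer without a backlog of one stage starving the other.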

Real-Time Streaming: The Glue

The user experience depends entirely on streaming. Nobody wants to watch a spinner for 60 seconds while 14 channels generate.

Our streaming pipeline:

- A Celery task completes generating an asset (image, video, or audio)

- The task publishes a typed event (channel.asset, channel.completed, framer.completed, etc.) to Redis pub/sub

- A WebSocket endpoint subscribes to the campaign's Redis channel and relays events to the frontend

- The Mosaic Canvas renders each asset the instant it arrives

Channels complete at different speeds: a Twitter image might take 10 seconds, while a YouTube video might take 45. Arrival-order streaming creates organic momentum as the campaign materializes card by card, so users watch their entire campaign come to life in real time.
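The decoupling is easy to demonstrate with an in-process bus. In the sketch, asyncio queues stand in for Redis pub/sub (with redis-py's asyncio client, publishing is await redis.publish(channel, message) and subscribing goes through redis.pubsub()). The campaign:{id} channel naming, the JSON envelope, and the campaign.completed terminal event are hypothetical; channel.asset, channel.completed, and framer.completed are the event types listed above. Events reach the subscriber in the order they are published, which is what produces the card-by-card arrival.

```python
from __future__ import annotations

import asyncio
import json
from collections import defaultdict


class InProcessPubSub:
    """Stand-in for Redis pub/sub: fan each published message out to every subscriber."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[asyncio.Queue]] = defaultdict(list)

    def subscribe(self, channel: str) -> asyncio.Queue:
        queue: asyncio.Queue = asyncio.Queue()
        self._subscribers[channel].append(queue)
        return queue

    async def publish(self, channel: str, message: str) -> int:
        for queue in self._subscribers[channel]:
            await queue.put(message)
        return len(self._subscribers[channel])  # like Redis PUBLISH: receiver count


def campaign_channel(campaign_id: str) -> str:
    return f"campaign:{campaign_id}"


async def publish_event(bus: InProcessPubSub, campaign_id: str, event_type: str, data: dict) -> None:
    """Called by a generator when it finishes; it never knows who is listening."""
    await bus.publish(campaign_channel(campaign_id), json.dumps({"type": event_type, "data": data}))


async def relay(queue: asyncio.Queue, send, stop_after: str = "campaign.completed") -> None:
    """What the WebSocket endpoint does: forward every event until the terminal one."""
    while True:
        event = json.loads(await queue.get())
        await send(event)  # e.g. websocket.send_json(event) in a real endpoint
        if event["type"] == stop_after:
            return
```

Neither side references the other: generators only know a channel name, and the relay only knows how to forward JSON.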

The Architectural Principles at Work

Building BrandForge reinforced several principles that sit at the core of BroadComms' approach to enterprise AI architecture.

Match the model to the task. We used gemini-2.5-pro for deep strategic reasoning, Flash models for fast parallel execution, Veo for video, and native audio models for voice synthesis and speech. Using the most capable model for everything is the most expensive path to mediocre results. Specialization wins.

Separate planning from execution. The two-stage Framer/Generator architecture ensures that creative strategy is consistent across all 14 channels because they all work from the same plan, while execution is fully parallelized. The creative consistency comes from the shared plan, not from 14 generators independently interpreting a brief.

Multimodal prompting transforms output quality. Sending the brand's actual logo and product images alongside text prompts produces fundamentally better brand-consistent output than describing the brand in words. The models understand visual context natively, so use it.

Redis pub/sub is the right abstraction for AI streaming. It decouples generation from delivery cleanly. Each generator publishes events without knowing who is listening. The WebSocket subscribes without knowing what is generating. This separation made the system significantly easier to scale, debug, and extend.

Design for session continuity in voice agents. Voice interaction happens over time. A 10-minute timeout is not a constraint you can ignore: you need to architect around it with proactive reconnection, session resumption, and context compression. Building this correctly from the start is far less painful than retrofitting it.

What's Next for BrandForge

The hackathon forced rapid scope discipline, but the roadmap is clear.

Direct Platform Publishing. The OAuth infrastructure for 20+ platforms (LinkedIn, Twitter, Instagram, TikTok, YouTube, Pinterest, Reddit, Discord, Slack, and more) is already built and tested. The final mile to each platform's publishing API is the next release milestone.

Campaign Analytics. Tracking published campaign performance and feeding engagement data back into the generation pipeline, so BrandForge learns which creative directions perform best for each brand and audience segment over time.

Collaborative Workspaces. Multi-user brand workspaces with role-based access control (RBAC), shared campaign libraries, and approval workflows, evolving BrandForge from a solo creative studio into a team platform.

Expanded Channel Coverage. The @register_channel decorator pattern makes adding new channels trivial. Google Ads, WhatsApp Business, Telegram, Snapchat, and long-form YouTube video are all planned.

Try It

The BrandForge demo is live and open for testing. You can explore the platform today at brandforge.boilerplate.online.

If you are building with Gemini's multimodal capabilities, we hope the techniques documented here (especially hex-to-color-name sanitization for Veo, multipart brand asset prompting, Redis-backed session resumption, and the Framer/Generator two-stage architecture for content creation) save you time and production pain.

At BroadComms, everything we build is an expression of what we advocate for enterprise clients: purposeful AI architecture, the right model for the right task, and systems designed to scale without sacrificing governance. BrandForge is that philosophy applied to marketing orchestration.

This post was written for the Google Gemini Live Agent Challenge. BrandForge is BroadComms' entry in the Creative Storyteller category. #GeminiLiveAgentChallenge